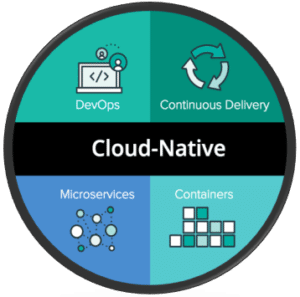

Cloud-native — main tenets (Img src: https://pivotal.io/cloud-native)

This song seems to get a lot of airplay, because even I have heard it and I am usually tuned to an 80’s station (which I refuse to call an oldies station, btw…). When I am faced with conflicting points of view or goals, I do try to find a middle ground. That is difficult in today’s highly charged political climate, but I would hope it is easier at the intersection of software development and cybersecurity. It is proving to be almost as difficult in today’s cloud-native application world.

See, if you’re a defense contractor providing software development, cybersecurity, and program management services to the DoD, you will be receiving what sounds like pretty straightforward counsel and guidance, which I’ll summarize as:

- We are going to a cloud computing environment. The IC did their C2S cloud so we are going to do JEDI. Plan to be building future applications and software in ‘the cloud’.

- Cybersecurity is of paramount importance. Be sure to follow your RMF and use NIST 800-53 controls throughout your applications so they can get an ATO and we can use them.

So why can’t we just meet in the middle? I’m losing my mind just a little…

There is an obvious way to meet in the middle: lift and shift your application from its traditional bare-metal implementation and put it in the cloud on a series of reserved VM instances. Take your previous security controls, the firewalls, VPNs, and such, and put their virtual equivalents ‘around’ your application. Then lock down ingress/egress pathways, verify ACLs, document your NIST Compliance Checklist and Site Security Plan, run some drills, fix some gaps, and repeat.

The problem is that in a cloud world this is often very expensive, especially for applications that are not 80%+ utilized all the time. A reserved VM instance can cost several hundred dollars a month, and some applications take 10-20 VMs, plus a few more for the security controls. Yet the application may only be used 5-10% of the time. Granted, when it is needed it is critical and important and necessary and every other adjective we want to throw at it to imply that when we need it we NEED it.

But simply put, recreating a traditional bare-metal enterprise architecture in the cloud, with the same traditional controls that NIST/DoD expects to see, is not a cost-effective or operationally sound idea.
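To put rough numbers on that, here is a back-of-the-envelope sketch in Python. The specific inputs ($300 per VM per month, 15 VMs, 7.5% utilization) are my own illustrative picks from inside the ranges above, not real pricing, and the `idle_spend` helper is hypothetical.

```python
def idle_spend(vm_monthly_cost: float, vm_count: int, utilization: float) -> dict:
    """Split an always-on monthly bill into its used and idle portions."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    total = vm_monthly_cost * vm_count
    used = total * utilization
    return {"total": total, "used": used, "idle": total - used}


# Illustrative inputs only (assumptions, not quoted prices):
# $300 per VM per month, 15 VMs, application busy 7.5% of the time.
bill = idle_spend(vm_monthly_cost=300.0, vm_count=15, utilization=0.075)
print(f"monthly bill:             ${bill['total']:,.2f}")
print(f"paying for idle capacity: ${bill['idle']:,.2f}")
```

With those assumed inputs, more than 90% of the monthly bill pays for capacity that is sitting idle.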

Cloud Breaks Legacy Security Models

Cloud consumption models, and specifically their billing practices around serverless and container applications, push toward event-driven architectures that are designed to scale up under load and scale down as load eases. Billing granularity is down to measuring utilization in 100 ms increments. So being able to quickly spin up resources when needed and quickly kill them off when they are not is critical to staying within budget.
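As a small illustration of what that granularity means, the sketch below assumes a provider that rounds each invocation up to the next 100 ms increment. The rounding rule and the `billed_ms` helper are assumptions for illustration; check your provider's actual metering rules.

```python
BILLING_INCREMENT_MS = 100  # assumed metering increment


def billed_ms(duration_ms: int) -> int:
    """Round a run time up to the next billing increment (integer math only)."""
    if duration_ms < 0:
        raise ValueError("duration_ms must not be negative")
    increments = -(-duration_ms // BILLING_INCREMENT_MS)  # ceiling division
    return increments * BILLING_INCREMENT_MS


for duration in (1, 100, 101, 950):
    print(f"ran {duration} ms, billed {billed_ms(duration)} ms")
```

The point is that a workload that runs for a fraction of a second is billed for a fraction of a second, not for a month of reserved capacity.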

Additionally, many of the methods for orchestrating workload scale use ephemeral IP addresses. This is great for not having to care about the mess of infrastructure underneath your pristine code. But when those IP addresses are reused you run into a challenge: all those legacy security appliances you deployed to meet the NIST SP 800-53 controls are rendered ineffective or downright useless, because they use the IP address as the primary key for user and workload identification.
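Here is a toy sketch of that failure mode. Everything in it is hypothetical: the `Workload` type, the service names, and the addresses are made up, and no real firewall or orchestrator works in exactly this way. It only shows what happens when a rule is keyed on an address that gets recycled, with a rule keyed on the workload's name alongside for contrast.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    identity: str  # hypothetical service name
    ip: str        # ephemeral address handed out by the orchestrator


# Legacy-style rule: the IP address is the key.
ip_allow_list = {"10.0.0.5"}

# Contrast: a rule keyed on who the workload is.
identity_allow_list = {"payments-api"}


def allowed_by_ip(w: Workload) -> bool:
    return w.ip in ip_allow_list


def allowed_by_identity(w: Workload) -> bool:
    return w.identity in identity_allow_list


# The payments service scales down, and its old address is handed
# to an unrelated workload.
batch_job = Workload(identity="untrusted-batch-job", ip="10.0.0.5")

# The payments service later comes back on a different address.
payments_rescheduled = Workload(identity="payments-api", ip="10.0.0.9")

print(allowed_by_ip(batch_job))                   # True: wrong workload admitted
print(allowed_by_ip(payments_rescheduled))        # False: right workload blocked
print(allowed_by_identity(batch_job))             # False
print(allowed_by_identity(payments_rescheduled))  # True
```

The IP-keyed rule fails in both directions: it admits whatever inherited the address and blocks the legitimate service once it moves.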

So, what is a contractor to do with Cloud Native applications?

Well, this is the topic of the lunch we are hosting Wednesday at the Navy Gold Coast event in San Diego, so if you are really interested in learning more, shoot me an email and I’ll get you an invite. (The chow should be halfway decent, and certainly better than convention center food court meat by-products, too!)

But notwithstanding my shameless plug for our lunch Wednesday, there are a few things that can be done that are worth noting:

- Cloud-native application development is largely predicated on using smaller bite-sized chunks of computing. These are often packaged into containers or serverless application instances.

- The connections and operation of an application are defined by well-formed and version-controlled APIs between these disparate functions. Managing your APIs and API keys is a critical component.

- Deploying a system to secure your application that does not depend on the underlying IP address space is going to be critical. Such a system instead understands the actual identity of a user, a service, an application, a resource, etc., and uses that identity as the abstraction for applying policy.

- Allan Friedman at the Department of Commerce has been pushing an industry effort to focus on Software Supply Chain – vendors and application developers should provide a bill of materials to any consumers that states what software and source versions were used to create a given application. This will be critical in understanding exploit inheritance and is an effort I would recommend anyone building mission-critical applications consider in their development plan.
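To make the bill-of-materials idea in the last item concrete, here is a minimal sketch of how a consumer might use one to spot inherited exposure. The component names, versions, and advisory labels are all made up, and the plain dictionaries stand in for whatever bill-of-materials format and vulnerability feed you actually use.

```python
# Hypothetical bill of materials for one application: component -> version.
bill_of_materials = {
    "example-web-framework": "2.3.1",
    "example-crypto-lib": "1.0.2",
    "example-json-parser": "4.1.0",
}

# Hypothetical advisory feed: (component, affected version) -> advisory label.
advisories = {
    ("example-crypto-lib", "1.0.2"): "ADVISORY-0001",
    ("example-json-parser", "3.9.0"): "ADVISORY-0002",
}


def inherited_exposures(bom: dict, feed: dict) -> list:
    """Return (component, version, advisory) for every exact match in the BOM."""
    return sorted(
        (name, version, feed[(name, version)])
        for name, version in bom.items()
        if (name, version) in feed
    )


for name, version, advisory in inherited_exposures(bill_of_materials, advisories):
    print(f"{name} {version} is affected by {advisory}")
```

Without the bill of materials, the consumer has no way to know that the crypto library is in the build at all, let alone which version.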

The contractors who figure this out and apply these principles to application development over the next five-year cycle in DoD are likely to:

- Be able to provide faster application development, better outcomes, and quicker iterative development cycles for the end-user

- Deliver their application at a markedly lower cost than a competitor not taking advantage of cloud-native infrastructure

- Have technical volumes that strongly differentiate their solution when analyzed through a risk, compliance, and cybersecurity lens.

Some tools I have played with and spent some time learning about that you may find interesting in this space are Kubernetes, Istio, Aporeto, Tigera, Twistlock, and Heptio.

Happy Hunting!